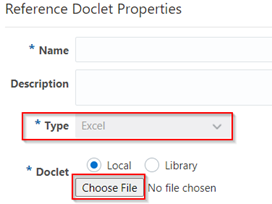

So, what exactly is embedded content? It starts with common report content that reference doclet authors create and keep in a reference doclet. That content then becomes available for other doclet authors to use.

In the past, when we embedded a report into the report package, its note and text boxes were not offered as available content, and getting them into the report package was a hassle.

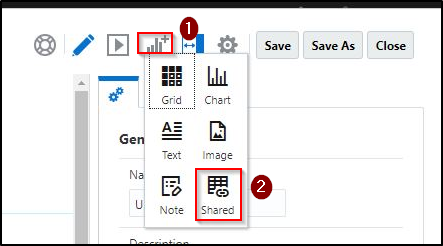

With the new and improved Report Reference doclets, any Text or Note boxes in the Report can be designated as available content. This means that regular doclet authors can now easily include Text and Note boxes in their reports, just like they would with grids and charts.

Financial reporting is a big deal for most companies. You’ve got your internal reports for things like board packages, management updates, or quarterly updates. And then you’ve got external reports, like statutory, regulatory, filing, or annual reports. No matter what kind of report it is, the important part is gathering accurate information, double-checking it for accuracy, and presenting it in a way that makes sense for businesses.

How it works:

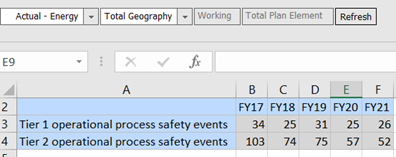

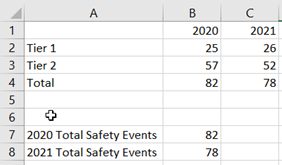

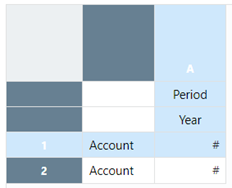

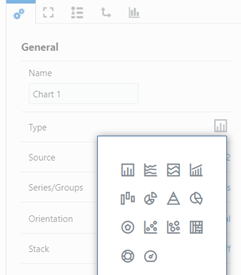

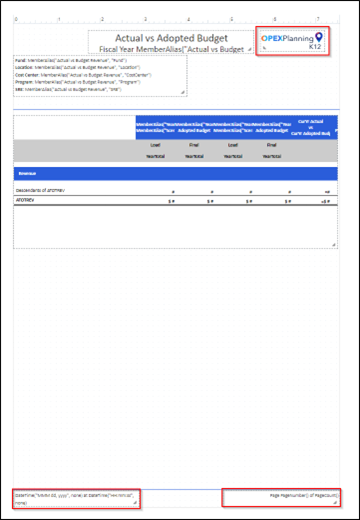

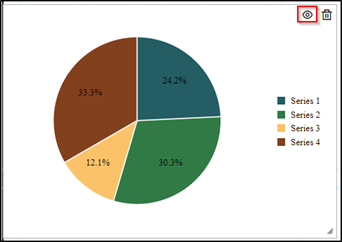

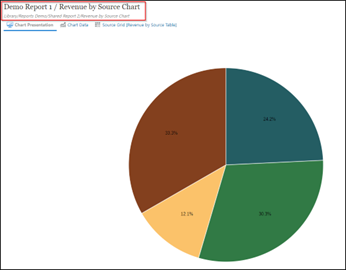

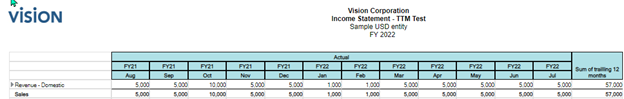

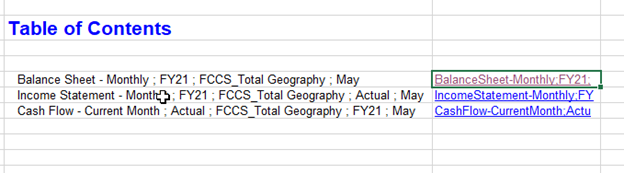

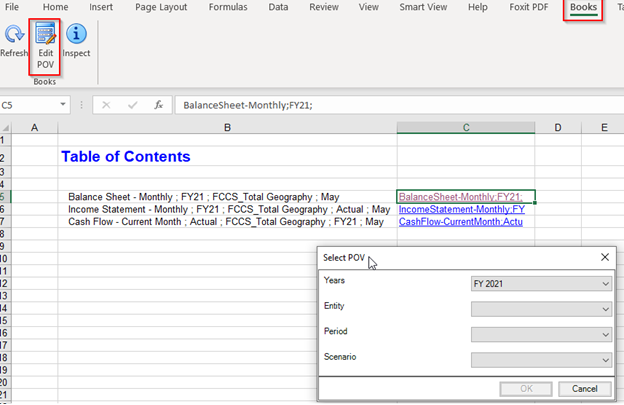

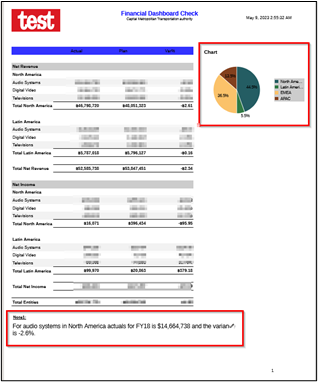

Here’s a report that has logos in the header and footer text, which adds a nice touch. It also includes grids, charts, notes, and text boxes.

Now, we’re going to embed this awesome report into the report package. But, before we do that, we’ll make sure to include a grid, chart, and some notes in the package as well.

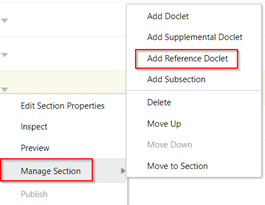

Oh, and one more thing! We’ll add this report as a reference doclet in the report package. That way, it’ll be easily accessible and can be used as a point of reference for other reports.

So, get ready to jazz up that report package with this fantastic report and make it even more informative and visually appealing!

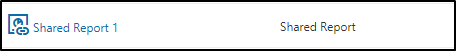

Reference doclet authors upload and check in reference doclets, thus making the report content available to doclet authors.

Here is the link for your reference: About Embedded Content from Reference Doclets

Add the note box to the report package and make it available:

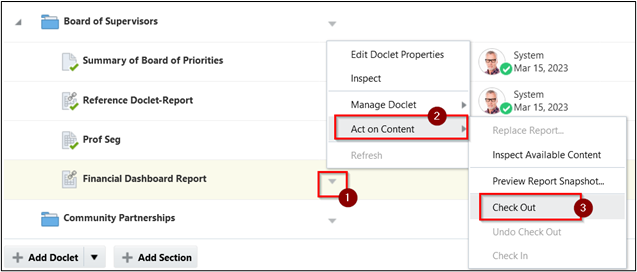

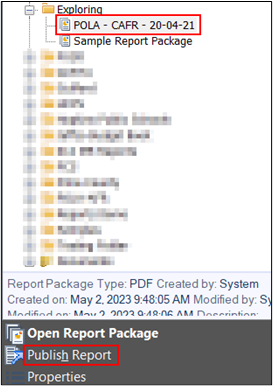

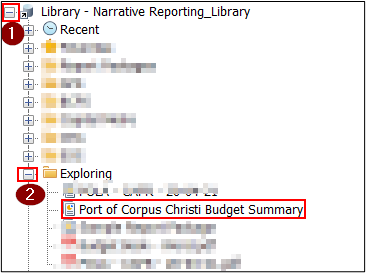

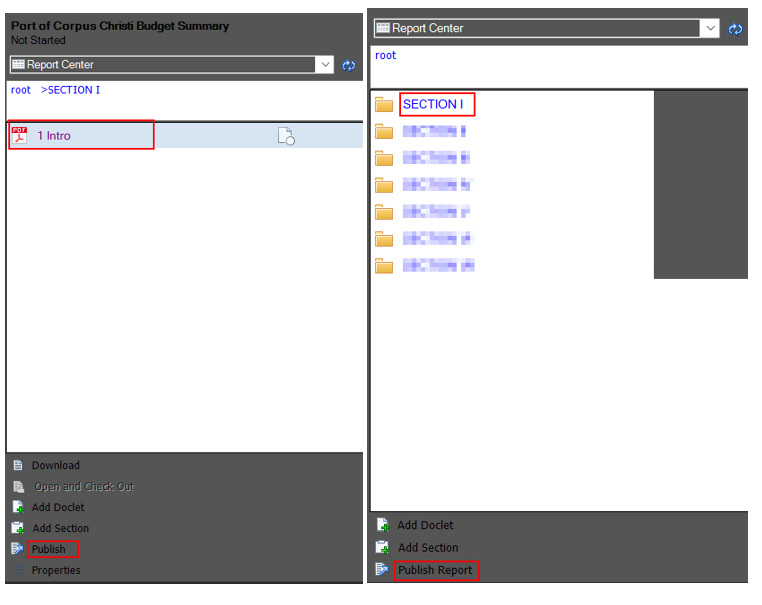

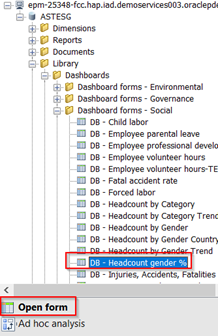

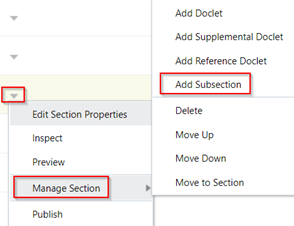

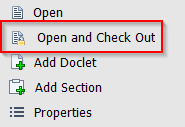

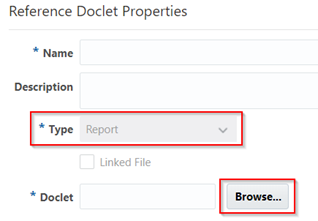

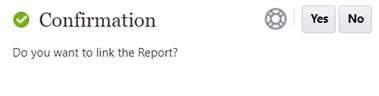

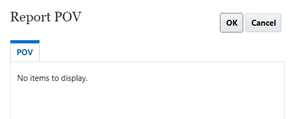

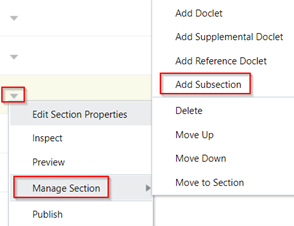

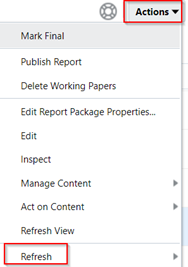

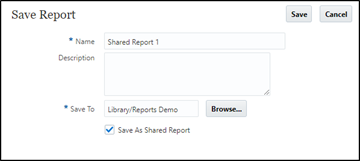

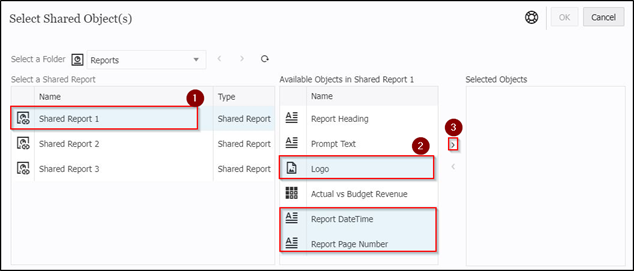

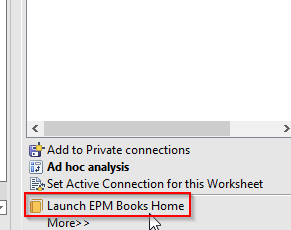

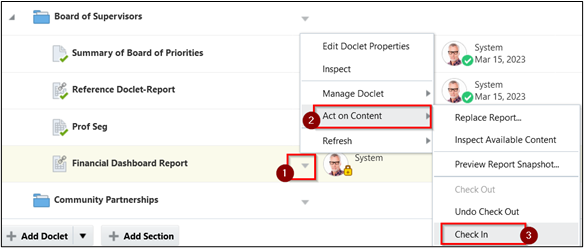

1. Select the report that was added as a reference doclet.

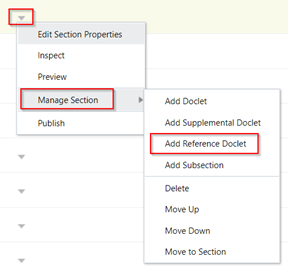

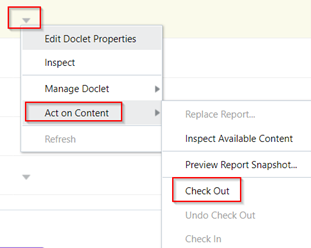

2. Click the down arrow next to the selected report.

3. Click “Act on Content” > “Check Out”.

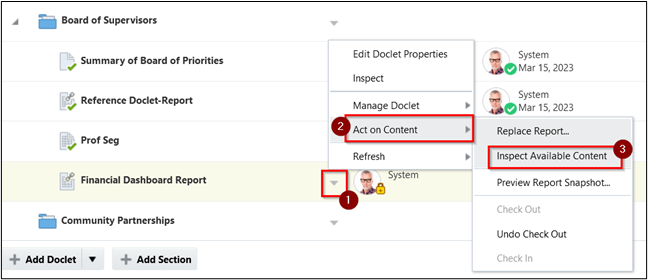

4. Click the down arrow next to the selected report again.

5. Click “Act on Content” > “Inspect Available Content”.

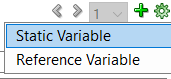

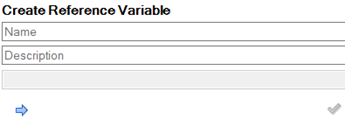

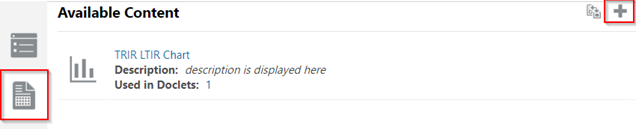

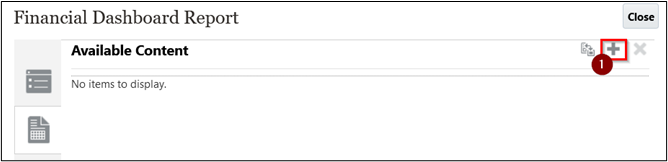

6. On the Available Content screen, click “+” to add content.

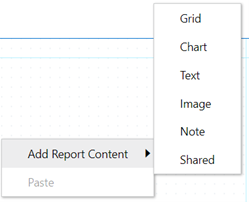

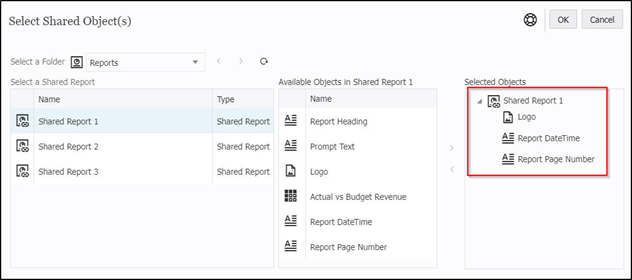

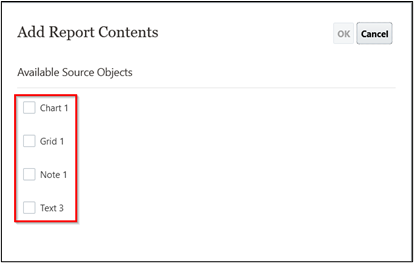

7. The Add Report Contents pop-up opens, listing the Chart, Grid, and Note that can be added.

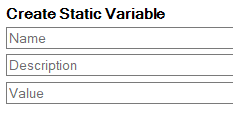

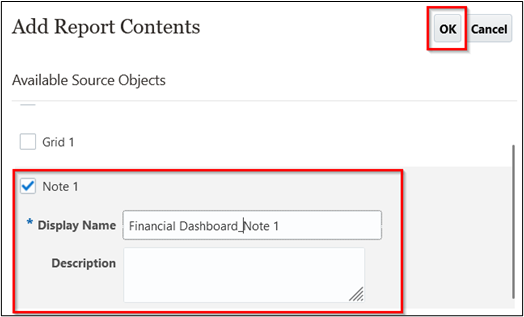

8. Select the check box next to Note 1, and enter a display name and description for it.

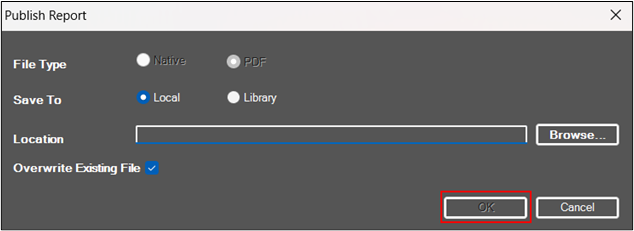

9. Click OK.

10. Click Close.

11. Click the down arrow next to the selected report.

12. Click “Act on Content” > “Check In”.

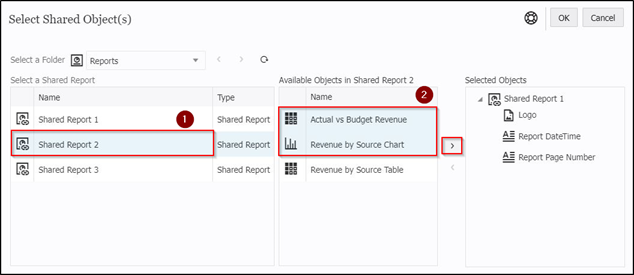
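The order of the steps above (check out, make content available, check in) can be pictured with a small Python model. This is only an illustrative sketch, not an Oracle API: the `ReferenceDoclet` class, its methods, and the object names `Grid 1` and `Chart 1` are hypothetical, and the rule that content can be made available only while the doclet is checked out is an assumption taken from the order of the steps.

```python
from dataclasses import dataclass, field


@dataclass
class ReferenceDoclet:
    """Hypothetical in-memory stand-in for a report-based reference doclet."""

    name: str
    report_objects: list[str]  # grids, charts, notes, text boxes in the report
    checked_out: bool = False
    # Maps a report object to the display name doclet authors will see.
    available: dict[str, str] = field(default_factory=dict)

    def check_out(self) -> None:
        self.checked_out = True

    def add_available_content(self, obj: str, display_name: str) -> None:
        # Assumption: mirrors the steps, where Check Out comes first.
        if not self.checked_out:
            raise RuntimeError("check out the reference doclet first")
        if obj not in self.report_objects:
            raise ValueError(f"{obj!r} is not in report {self.name!r}")
        self.available[obj] = display_name

    def check_in(self) -> None:
        self.checked_out = False


# Walk through the same sequence as the numbered steps.
doclet = ReferenceDoclet("Financial Dashboard", ["Grid 1", "Chart 1", "Note 1"])
doclet.check_out()
doclet.add_available_content("Note 1", "Financial Dashboard_Note 1")
doclet.check_in()
print(doclet.available)
```

After check-in, the note appears under its display name, which is the name doclet authors later look for when embedding.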

Once the content has been made available, embed the note in the standard doclet:

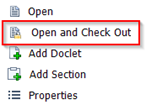

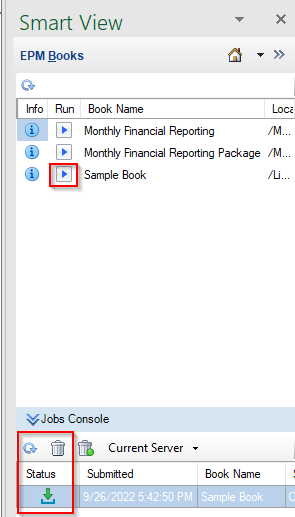

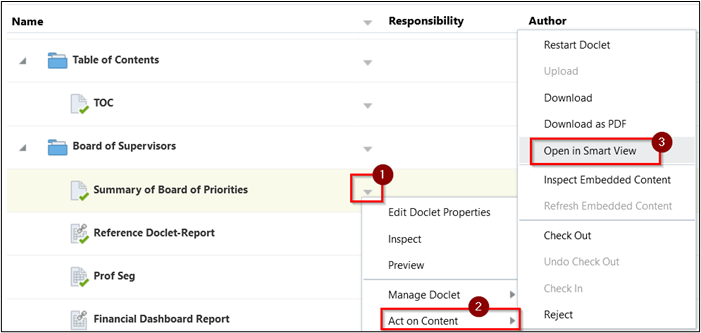

1. Select the doclet where you need to embed the note box.

2. Click the down arrow next to the doclet.

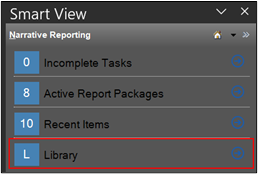

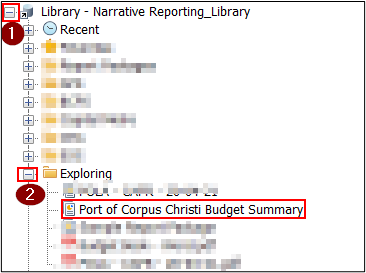

3. Click “Act on Content” > “Open in Smart View”.

4. Enter the login credentials.

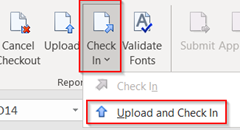

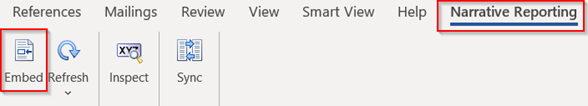

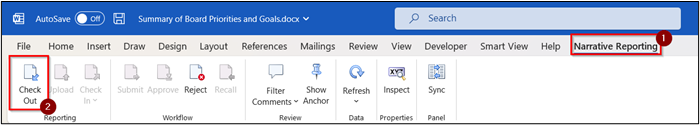

5. In MS Word, go to the Narrative tab and click Check Out.

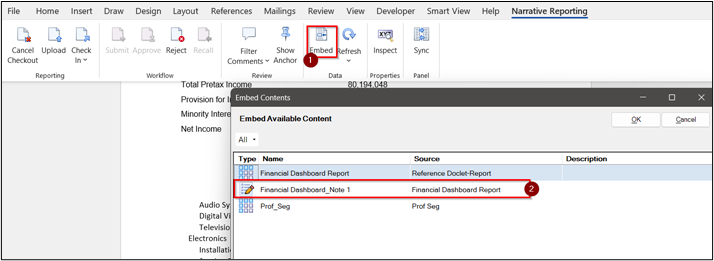

6. Click on Embed.

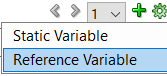

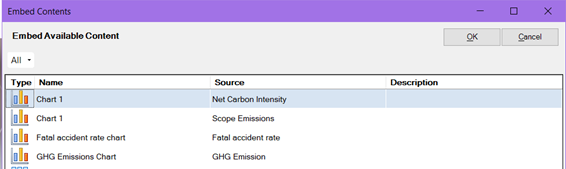

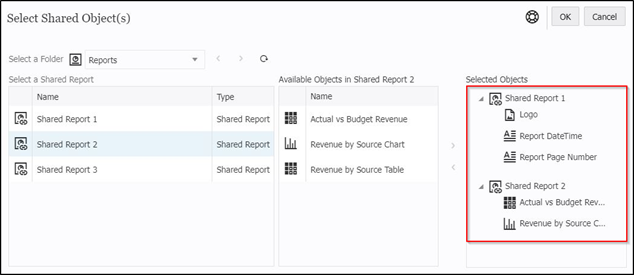

7. In the Embed Available Content pop-up, the list includes Financial Dashboard_Note 1, which was made available from the reference doclet.

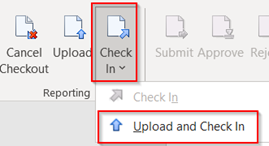

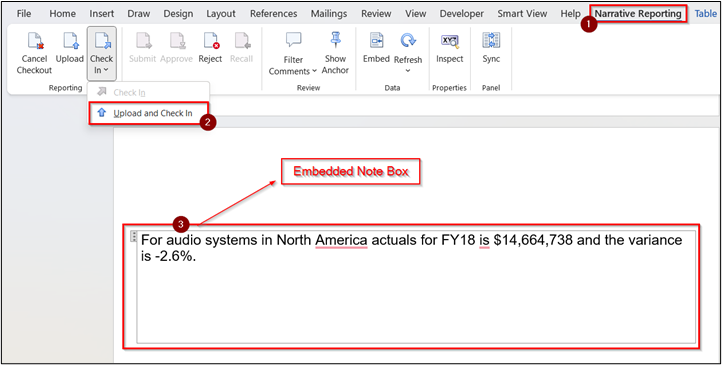

8. Click Upload and Check In from the Check In drop-down. Preview the final version of the doclet to confirm that the note box has been embedded in the standard doclet.

Important considerations

There are quite a few considerations when inserting or removing reports-based content in a doclet. The Oracle documentation covers them.

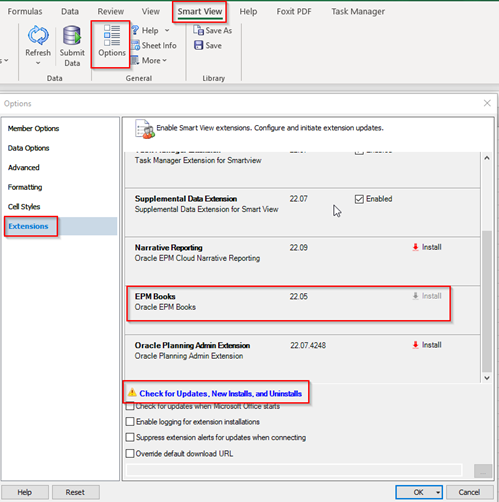

To use Smart View to embed Text or Note boxes in Regular doclets, install the 23.04 or later version of the Narrative Reporting extension for Smart View.
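If you track installed extension versions across a team, the "23.04 or later" requirement can be checked with a small helper. This is a minimal sketch that assumes the version is written as dot-separated numbers such as `23.04`. The function names are hypothetical, and the way the real extension reports its version may differ.

```python
def parse_version(text: str) -> tuple[int, ...]:
    """Turn a string like '23.04' into a tuple of integers, e.g. (23, 4)."""
    return tuple(int(part) for part in text.strip().split("."))


# Minimum extension version named in this post.
MINIMUM = parse_version("23.04")


def supports_text_and_note_embedding(installed: str) -> bool:
    """Return True when the installed version is 23.04 or later."""
    return parse_version(installed) >= MINIMUM


for version in ["22.12", "23.04", "23.10"]:
    print(version, supports_text_and_note_embedding(version))
```

Comparing tuples of integers avoids the trap of comparing the strings directly, where "23.10" would sort before "23.4".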

In conclusion, this part of the reporting series showed how to make notes and text boxes available as content in a reference doclet and how to embed them in a standard doclet.

We explored the importance of incorporating notes and text boxes to enrich the context, highlight key details, and improve communication.